Thursday Jun 04, 2026

Thursday, 26 February 2026 04:00

Introduction: Why AI governance matters now

Artificial Intelligence (AI) is no longer a futuristic concept—it is already embedded in decision-making across finance, healthcare, logistics, education, public administration, and national security. While AI offers immense productivity gains, efficiency, and innovation, it also introduces significant ethical, legal, operational, and security risks.

As AI adoption accelerates globally, AI governance has emerged as a critical mechanism to ensure that AI systems are safe, ethical, transparent, and accountable. Countries that fail to establish robust AI governance frameworks risk data breaches, reputational damage, regulatory non-compliance, and erosion of public trust.

For Sri Lanka, which is actively pursuing digital transformation and e-governance initiatives, the absence of a structured AI governance framework could become a strategic vulnerability rather than a development advantage.

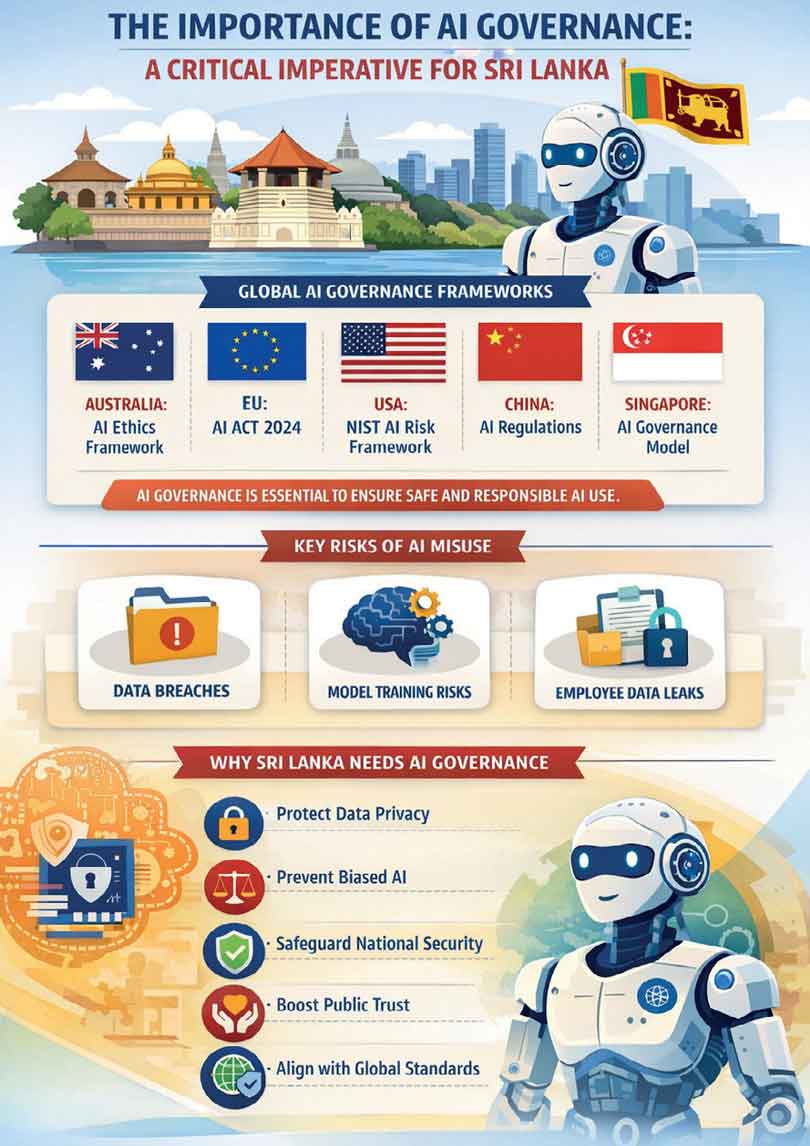

Global AI governance landscape: Lessons for Sri Lanka

Many countries have already recognised the urgency of governing AI responsibly and have introduced national or regional frameworks.

Established International AI Governance Frameworks

Australian AI Ethics Framework

Focuses on principles such as fairness, transparency, accountability, privacy protection, and human-centred AI.

European Union AI Act (2024)

Introduces a risk-based approach, classifying AI systems into unacceptable, high-risk, limited-risk, and minimal-risk categories, with strict compliance obligations for high-risk applications. The AI Act is a European regulation on artificial intelligence (AI) – the first comprehensive regulation on AI by a major regulator anywhere.
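
To make the risk-based approach concrete, the four tiers can be sketched as a simple lookup. This is only an illustration: the one-line treatment attached to each tier is a simplified summary rather than legal text, and the example use cases and the tiers assigned to them are assumptions that would need legal review in practice.

```python
from enum import Enum


class RiskTier(Enum):
    """The four risk tiers named in the text, from most to least restricted."""
    UNACCEPTABLE = "unacceptable"
    HIGH = "high-risk"
    LIMITED = "limited-risk"
    MINIMAL = "minimal-risk"


# Simplified, illustrative summary of what each tier implies (not legal text).
TIER_TREATMENT = {
    RiskTier.UNACCEPTABLE: "prohibited",
    RiskTier.HIGH: "strict compliance obligations",
    RiskTier.LIMITED: "transparency obligations",
    RiskTier.MINIMAL: "no additional mandatory obligations",
}

# Hypothetical inventory of AI use cases. The tier given to each one is an
# assumption made for illustration only.
EXAMPLE_INVENTORY = {
    "social scoring of citizens": RiskTier.UNACCEPTABLE,
    "credit scoring for loan approval": RiskTier.HIGH,
    "customer service chatbot": RiskTier.LIMITED,
    "email spam filter": RiskTier.MINIMAL,
}


def treatment_for(use_case: str) -> str:
    """Return the simplified treatment for a use case in the example inventory."""
    tier = EXAMPLE_INVENTORY[use_case]
    return f"{use_case}: {tier.value} -> {TIER_TREATMENT[tier]}"


if __name__ == "__main__":
    for name in EXAMPLE_INVENTORY:
        print(treatment_for(name))
```

An organisation could start from an inventory like this one, listing every AI system it uses and the tier it believes applies, before working out the obligations that follow.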

NIST AI Risk Management Framework (USA)

Provides a voluntary but structured framework to identify, assess, manage, and govern AI-related risks across the AI lifecycle.

China AI Governance Framework

Strongly emphasises state control, algorithm accountability, content moderation, and alignment with national security priorities.

Singapore Model AI Governance Framework

Widely regarded as a practical, business-friendly framework focusing on explainability, transparency, human oversight, and responsible deployment.

ISO/IEC AI Standards

These include ISO/IEC 23894 (AI risk management) and ISO/IEC 42001 (AI management systems), which provide globally recognised governance and control structures.

Key takeaway:

AI governance is no longer optional—it is becoming a regulatory expectation and competitive differentiator.

Key risks associated with AI usage

While AI improves efficiency, unmanaged AI usage exposes organisations to serious risks:

1. Data confidentiality and security risks

One of the most critical risks arises from employees uploading sensitive information into public AI platforms such as ChatGPT.

Real-World Incident: US Government Data Exposure (2026)

In early 2026, a senior official at the Cybersecurity and Infrastructure Security Agency (CISA) uploaded “For Official Use Only” government contracting documents into a public version of ChatGPT for policy review purposes.

The incident triggered automated internal security alerts

The uploaded data was sensitive but unclassified

It represented a direct breach of internal security protocols

The official involved was acting CISA official Madhu Gottumukkala

This incident highlights how well-intentioned productivity use of AI can result in severe governance failures.

2. Data exposure and model training risks

When data is submitted to public AI models:

The data may be stored

It may be used to train future models

Sensitive information could resurface indirectly in future AI-generated responses

Even if identifiers are removed, contextual or proprietary knowledge leakage remains a significant concern.

3. Widespread organisational risk

Research by Cyberhaven indicates that 8.6% of employees have pasted company data into ChatGPT, and that 11% of all data pasted is classified as confidential. More concerning, 4.7% of employees have pasted sensitive data, including source code, client information, and strategic documents (Cyberhaven, 2025).

This demonstrates that AI-related data risk is systemic, not accidental or isolated.
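
To give a sense of scale, the percentages above can be applied to a given headcount. The sketch below assumes, purely for illustration, that the quoted rates carry over unchanged to the organisation in question; real figures will vary by sector, tooling, and controls.

```python
# Rates quoted in the text (Cyberhaven, 2025).
SHARE_PASTING_COMPANY_DATA = 0.086
SHARE_PASTING_SENSITIVE_DATA = 0.047


def estimate_exposure(headcount: int) -> dict[str, int]:
    """Estimate how many employees fall into each group.

    Assumes the quoted rates apply as-is to this organisation, which is a
    simplification made for illustration.
    """
    return {
        "pasted_company_data": round(headcount * SHARE_PASTING_COMPANY_DATA),
        "pasted_sensitive_data": round(headcount * SHARE_PASTING_SENSITIVE_DATA),
    }


if __name__ == "__main__":
    # Hypothetical organisation with 1,000 employees.
    print(estimate_exposure(1000))
    # {'pasted_company_data': 86, 'pasted_sensitive_data': 47}
```

On these assumptions, an organisation of 1,000 people would have roughly 86 employees who have pasted company data into ChatGPT, about 47 of whom have pasted sensitive material.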

The case for organisational-level AI governance policies

To mitigate these risks, organisations must move beyond ad-hoc controls and establish formal AI governance policies.

Essential organisational AI controls

Clear policies defining what data can and cannot be uploaded to AI tools

Mandatory data classification awareness

Approval processes for AI usage in sensitive functions

Employee training on AI ethics and data responsibility

Clear accountability for AI-related breaches
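
The first three controls above can be expressed as a simple decision rule. The sketch below is one possible shape for such a rule, not a prescribed implementation: the classification levels, the tool names, the ceiling set for each tool, and the approval rule are all hypothetical and would be set by each organisation's own policy.

```python
from enum import IntEnum


class DataClass(IntEnum):
    """Hypothetical data classification levels, from least to most sensitive."""
    PUBLIC = 0
    INTERNAL = 1
    CONFIDENTIAL = 2
    RESTRICTED = 3


# Hypothetical policy: the most sensitive class each tool may receive.
MAX_CLASS_BY_TOOL = {
    "public_chatbot": DataClass.PUBLIC,
    "enterprise_ai_service": DataClass.CONFIDENTIAL,
}


def upload_decision(tool: str, data_class: DataClass, approved: bool = False) -> str:
    """Return 'allow', 'needs_approval', or 'deny' for a proposed upload."""
    ceiling = MAX_CLASS_BY_TOOL.get(tool)
    if ceiling is None:
        return "deny"  # tools not listed in the policy are denied by default
    if data_class > ceiling:
        return "deny"  # data is too sensitive for this tool
    if data_class >= DataClass.CONFIDENTIAL and not approved:
        return "needs_approval"  # sensitive data requires sign-off first
    return "allow"


if __name__ == "__main__":
    print(upload_decision("public_chatbot", DataClass.INTERNAL))
    print(upload_decision("enterprise_ai_service", DataClass.CONFIDENTIAL))
```

Denying unlisted tools by default means a new AI service cannot be used with company data until someone has consciously added it to the policy, which supports clear accountability.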

Secure AI alternatives and mitigation measures

Individual users can take some protective steps:

Disable chat history: Users can turn off chat history so their conversations are not saved or used to train the AI.

Opt out of data training: Users can choose not to allow their inputs to be used to improve future AI models, helping to reduce data reuse.

However, these measures are insufficient at enterprise or government level.

Many organisations are therefore adopting:

Microsoft Azure OpenAI Service with enterprise agreements

Private or on-premise AI models

Contractual guarantees on data isolation and non-training

These solutions allow organisations to leverage AI benefits without compromising confidentiality.

Why Sri Lanka urgently needs a National AI governance framework

Sri Lanka’s increasing use of AI in:

Banking and financial services

Public sector digitalisation

Education and professional training

Logistics, healthcare, and telecom

…makes the absence of a national AI governance framework a significant policy gap.

Key risks for Sri Lanka without AI governance

Data privacy violations (especially under the Personal Data Protection Act)

Unethical or biased AI decision-making

Loss of public trust in digital government initiatives

Reputational damage in international partnerships

Regulatory misalignment with global markets

Recommended AI governance directions for Sri Lanka

Sri Lanka should consider:

1. A National AI Governance Framework aligned with:

2. Sector-Specific AI Guidelines

3. Mandatory AI Usage Policies for public institutions

4. Enterprise-Level AI Governance Requirements

5. Capacity Building

Conclusion: Governing AI to unlock its true value

AI governance is not about restricting innovation—it is about enabling sustainable, trusted, and responsible AI adoption.

The 2026 CISA incident demonstrates that even advanced economies are vulnerable without strong AI governance. For Sri Lanka, proactive action today can prevent costly failures tomorrow.

By learning from global best practices and tailoring them to local realities, Sri Lanka can position itself as a responsible AI adopter, safeguarding data, protecting citizens, and enhancing economic competitiveness in the AI-driven world.

(The author is a Senior Chartered Accountant with over 20 years of professional experience, primarily in the banking sector, and is currently serving as AGM – Audit at one of the country’s leading banks. He is also a CISA-certified auditor (ISACA), holds professional qualifications in Artificial Intelligence, and serves as a visiting lecturer at PIM, CA Sri Lanka, and IBSL.)