Saturday Jun 06, 2026

Saturday Jun 06, 2026

Thursday, 9 January 2020 01:02 - - {{hitsCtrl.values.hits}}

This article is based on the keynote speech delivered by Dr. Rukshan Batuwita at the SLASSCOM AI Asia Summit 2019 held in November at Shangri-La Colombo

Businesses are on the lookout for new tools to pursue business growth, and create new revenue streams. Data science and machine learning are a perfect fit, especially for those businesses that look for answers to questions requiring in depth analysis of data, insights and predictions.

In this backdrop, Dr. Rukshan Batuwita elaborates on the best practices to follow in building an effective end to end data product to enhance business growth.

What is a data product?

A data product uses data to achieve an end goal. Data scientists use analytical methods such as machine learning or deep learning to analyse gathered data and come up with a solution to a defined problem. But, data science and machine learning is not a magic formula. The process of building an end to end data product is an iterative and complex one.

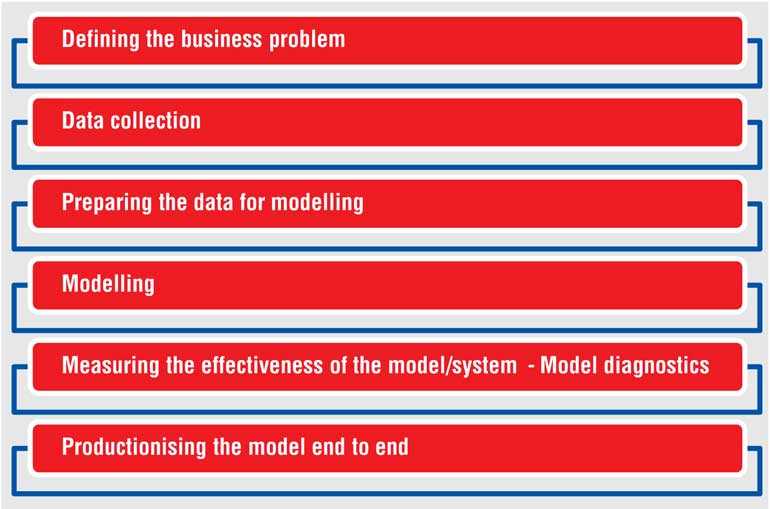

Building a data product – A snippet

The foremost step to building a data product involves defining a business problem. Companies need to clearly and precisely outline expectations from the project. This will help data scientists zero in on the right methodology as well as inputs to build the data model.

The next step is to collect data. Once the data is ready, the process of preparing data for modeling follows. Data scientists have to carefully plan to build the right kind of data model to help make critical business decisions.

The next step involves modeling application followed by steps to measure the effectiveness of the model or the system. Ultimately, if the model is effective, then follows productionising the model end to end.

Defining the business problem

Data science and machine learning are exciting. Companies are eager to implement a machine learning system to achieve business goals. But, it is important think deeply about why the company needs a machine learning product and AI strategy. To determine the solution that needs to be built, the company needs to carefully consider the problem that needs solving.

It is equally important to have the right amount of data and ultimately the required resources including the right skills, hardware and software support, time and people.

Moreover, a successful data science product pivots on real life applicability. Prior to building the solution, the company and all parties involved should consider their capacity to maintain the solution with constant upgrades, data collection, etc.

Designing the data solution

Data scientists can approach the designing stage in three steps.

First of all, it is important to set a quantifiable objective to achieve through building the data solution. Once they have access to a well-defined business problem, data scientists focus on converting this problem into a machine learning problem. Finally, they look into the real world constraints in implementing the product.

For example, available data may be biased in a particular case. It is imperative to go deep in to details and real world challenges before making low level decisions.

Data collection and preparation

Once data scientists come up with a solution, they then look into data collection. There are two types of data, experimental data and observational data. A word of warning, observational data could often be biased, coloured by perceptions outside of reality. Biases can lead to decisions that are unfair to customers.

Once the data is in place, data scientists focus on preparing the data for analytics or machine learning. In case of building a deep learning model with unstructured data, data scientists spend 20% of effort in data preparation and 80% in data modelling. The trick is to learn features from unstructured data and the model. This is the deep learning model.

The opposite method is true in the case of building a model based on unstructured data. In such situations, data scientists spend 70 to 80% of their time preparing data and about 10 to 20% of their time on model building.

Data preparation steps

Real world data sets are messy. Often, these data are collected through different systems which lead to varying definitions. Hence, the first step in data exploration should be cleaning. Data scientists should be ready to get their hands dirty as they immerse in data. It is important to look at data in different angles to comprehend the data. This is the key to getting a good data set.

Feature engineering is paramount. Well-structured data lead to the accuracy of the model.

Once the machine learning data set is ready, data scientists explore those sets too to identify potential problems and avoid garbage in, garbage out situations.

In simple terms, machine learning algorithms are not intelligent. These algorithms can map inputs and outputs. It is up to data scientists to provide machine learning ready data to get accurate outcomes.

Modelling

In the modelling stage, it would be a mistake to pick an algorithm that does not suit the actual problem. Data scientists should pick the algorithm based on the specific characteristics of the data sets, ultimate objective and resource constraints.

A best practice in creating data products would be starting with a simpler model such as a linear model. Afterwards, it is possible to move into more complex, non-linear models.

Model diagnostics

Data scientists start with assumptions before selecting the best suited algorithm to obtain the desired solution. Model diagnosis allows them to test and validate the assumption. It is important to conduct diagnostics of the model before putting the model in to production.

During model diagnostics, data scientists can extract useful insights from the model. The process reveals important information about important features of the model and correlations between those.

Testing and validating

Once data scientists go through each of these stages, finally it is important to test the model. While it is essential to test the model before productionising it, regular testing and validating are also important. Vital assumptions of a model could change driven by external factors.

Data scientists should consistently iterate on models to make sure that the system remains relevant to evolving business landscapes.