Tuesday May 19, 2026

Monday, 1 December 2025 01:37

Bad side of GenAI

Generative Artificial Intelligence, or GenAI, has been a useful tool for people to create new content, such as text, images, or music, by learning patterns from vast datasets. Unlike traditional AI, which analyses or categorises existing data, GenAI models produce novel output in response to users’ prompts. This is achieved through complex algorithms that predict what the next word, sound, or pixel should be, with the prediction based on past patterns. Therefore, it still cannot accommodate the unexpected or events that happen at random. Nevertheless, it has applications ranging from content creation for easy learning or effective marketing to medical research, where it can be used for drug discovery, clinical trials, or the identification of biomarkers for diseases. Thus, users have been able to create new texts, images, audio, and videos that can be used for productive purposes. However, its free availability has helped misusers create content for ulterior purposes, such as penetrating the security measures of financial institutions and robbing the money of bank customers. One such use has been the creation of fabricated images, videos, or audio of a person saying or doing something he or she never did. In industry parlance, these are known as ‘deepfakes’. These forged creations can be used for malicious purposes such as spreading misinformation and disinformation, committing financial fraud, or simply defaming known personalities.
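The idea of predicting the next word from past patterns can be illustrated with a deliberately tiny sketch. It is not how production GenAI models work internally, since they rely on large neural networks; it is a simple word-pair counter, with an invented corpus and hypothetical function names, meant only to show why a model of this kind has nothing to offer when it meets something it has never seen.

```python
from collections import Counter, defaultdict


def train_bigram(text):
    """Count, for each word, which words followed it in the training text."""
    words = text.lower().split()
    follow = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        follow[current][nxt] += 1
    return follow


def predict_next(follow, word):
    """Return the most frequent follower of `word`, or None if it was never seen."""
    counts = follow.get(word.lower())
    if not counts:
        return None  # unseen word: there is no past pattern to rely on
    return counts.most_common(1)[0][0]


# Invented toy corpus, used purely for illustration.
corpus = "the bank sent the code and the bank called the customer"
model = train_bigram(corpus)

print(predict_next(model, "the"))       # the word that most often followed "the"
print(predict_next(model, "deepfake"))  # a word that never appeared in the corpus
```

In this toy corpus, "bank" follows "the" more often than any other word, so it is the prediction; for a word absent from the corpus the function returns None, which mirrors the limitation noted above.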

Use of GenAI to dupe gullible people in Sri Lanka

As I mentioned in a previous article in this series, a major financial scam emerged in Sri Lanka recently in which the voices of the President,[1] the Prime Minister, and leading businesspersons were cloned to lure gullible citizens into a fake investment project.[2] Scammers posting their deepfakes on Facebook promised, through the cloned voices of these Government leaders, a high monthly return to anyone investing a small sum in a capital market project which they claimed had been approved by the Sri Lanka Government. Though the initial deepfakes are no longer on Facebook, the scammers have not stopped their deceitful game and are continuing it with new deepfakes cloning the voices of other known personalities and with forged textual messages attributed to leading entrepreneurs and public servants. One entrepreneur in whose name a deepfake has been created is the e-commerce giant Dulith Herath of Kapruka fame.[3] In the apparently fake advertisement that promotes the scheme, Herath’s cloned voice says that his investment platform uses ‘advanced trading algorithms’ to ensure a stable income for investors, who do not have to spend time learning complex financial instruments. The money is said to be invested automatically by the platform on the basis of AI-generated analysis of the stock and cryptocurrency markets. In another such fake advertisement, which used the official logo of DailyMirror Online, even the Central Bank Governor, Dr Nandalal Weerasinghe, was quoted as having endorsed the dubious investment scheme. This is despite the Central Bank Governor having warned the public in March 2025 of deepfake scams using his name or voice.[4]

FinCEN alert

Deepfakes have entered Sri Lanka targeting the gullible public. However, their more disastrous damage has been the attack on financial institutions, which presently rely on biometric data, personal information, and one-time passcodes or OTPs to verify the identity of their customers. Deepfakes have successfully penetrated all these security measures. It is, therefore, necessary for financial regulators and financial institutions to protect themselves from such attacks, as warned by the US Treasury Department’s Financial Crimes Enforcement Network, or FinCEN, in an alert issued in November 2024.[5] The alert is intended to help financial institutions identify fraud schemes associated with the use of deepfake media created with GenAI tools. It explains the common patterns in those scams, provides red flag indicators for them, and reminds the institutions concerned of the need to report such scams promptly under the US Bank Secrecy Act, or BSA.

A concern for financial institutions and Governments

Deepfake videos are high-quality man-made content that resembles reality. This resemblance is the main reason the public believes them to be authentic. However, it is no longer only people who are deceived. It is also the computer systems that have been set up to protect banking transactions and thereby the money belonging to customers. This is despite the leading developers of GenAI taking precautions to keep prospective misusers at bay through continuous oversight and control systems. But criminals intent on robbing other people’s money are also busy developing methods to evade and circumvent the safety measures introduced by genuine developers. This has been facilitated by the open-source availability of these tools to anyone wishing to use them. As a result, those intending to use them for criminal activities can produce deepfakes at a lower cost and faster than the genuine developers can respond. This is a real threat to financial institutions which believe that they have impenetrable digital systems to thwart potential scammers. It is also a threat to regulators, who must safeguard the interests of bank customers, on the one side, and implement a national policy on anti-money laundering (AML) and countering the financing of terrorism (CFT), on the other.

Criminals liberally using GenAI tools

FinCEN has found that criminals have used GenAI tools to create content that can compromise the customer identity verification processes of financial institutions. Those creations have enabled the criminals to deceive the computer systems of financial institutions by falsifying documents, photographs, or videos, thereby breaking the customer due diligence, or CDD, controls embedded in those systems. The images most commonly altered or newly created have been photographs taken from widely available documents like driving licences, passports, and identity cards, or simply from social media like Facebook or Instagram. When these are used in combination with stolen personal identification information relating to customers, even the best-protected digital banking system can be duped. When it comes to AML or CFT, the tactic of the criminals has been to open false accounts with banks, using fake identities produced through GenAI tools, to receive and launder money earned from other criminal activities. Those are known in banking terminology as ‘funnel accounts’.

Duping employees

It is the responsibility of the employees of financial institutions to exercise due diligence in identifying deepfake attempts by criminals. FinCEN’s alert lists nine red flag indicators for this purpose. While the list is not exhaustive, it gives employees useful guidance for detecting, in advance, attempts to compromise their security measures or to dupe them. First, use the eyeball test to detect obvious signs that a customer’s photo has been altered or fabricated. Second, be cautious when a customer presents multiple identity documents that are inconsistent with one another. Third, be alert when a customer logs in through a third-party webcam. Fourth, be careful when a customer declines to use multifactor authentication to verify his identity. Fifth, check whether a reverse-image lookup or open-source search of an identity photo matches an image available publicly. Sixth, note whether a customer’s photo or video is flagged by commercial or open-source deepfake detection software. Seventh, use GenAI software to flag whether a document or photo submitted by a customer is a deepfake. Eighth, look for whether a customer’s address or device data are inconsistent with his identity documents. Ninth, identify whether a customer has made rapid transactions through his account. Bank employees should add new flags to this list as situations change and criminals resort to new methods of hacking digital banking systems.
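One way a bank might turn such a checklist into a routine screening step is sketched below. This is a minimal illustration only: the field names, the wording of the flag descriptions, and the threshold of two flags are all assumptions made for the example, not anything prescribed by FinCEN, and a real system would feed these fields from its own detection tools.

```python
from dataclasses import dataclass


@dataclass
class OnboardingCase:
    """Hypothetical summary of one customer check; every field name is an assumption."""
    photo_looks_altered: bool = False
    id_documents_inconsistent: bool = False
    uses_third_party_webcam: bool = False
    declined_mfa: bool = False
    photo_found_in_reverse_search: bool = False
    flagged_by_deepfake_detector: bool = False
    flagged_by_genai_check: bool = False
    address_or_device_mismatch: bool = False
    rapid_transactions: bool = False


# Short descriptions paraphrasing the nine indicators discussed in the text.
RED_FLAGS = {
    "photo_looks_altered": "photo shows visible signs of alteration",
    "id_documents_inconsistent": "identity documents are inconsistent",
    "uses_third_party_webcam": "login through a third-party webcam",
    "declined_mfa": "customer declined multifactor authentication",
    "photo_found_in_reverse_search": "identity photo matches a public image",
    "flagged_by_deepfake_detector": "deepfake detection software raised a flag",
    "flagged_by_genai_check": "GenAI-based check raised a flag",
    "address_or_device_mismatch": "address or device data do not match documents",
    "rapid_transactions": "rapid transactions through the account",
}


def raised_flags(case):
    """List the descriptions of every red flag set on this case."""
    return [text for name, text in RED_FLAGS.items() if getattr(case, name)]


def needs_manual_review(case, threshold=2):
    """Escalate when at least `threshold` flags are raised (threshold is an assumption)."""
    return len(raised_flags(case)) >= threshold


case = OnboardingCase(declined_mfa=True, uses_third_party_webcam=True)
print(raised_flags(case))
print(needs_manual_review(case))
```

Keeping the flags in a single table makes it easy to add new indicators as criminals change their methods, which is the point made at the end of the paragraph above.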

Entrust report on identity frauds

Entrust, a global company specialising in identity-centric security solutions that protect people, devices, and data, has published its 2025 Identity Fraud Report with the support of its subsidiary Onfido, outlining the modus operandi deepfake creators use to attack financial institutions.[6] The report presents three key findings.[7]

A rise in digital manipulations

First, digital manipulation has replaced the frauds hitherto committed by forging physical documents. The Entrust report records this as the first year in which this fraudulent technique has been widely used, with a historic 244% year-over-year increase in its occurrence. The culprits have been the easy and free availability of GenAI-assisted tools, methodologies shared among users, and the rise of fraud-as-a-service that can be used by anyone wishing to attack the digital systems of financial institutions. Economically, it is the most cost-effective way to rob people.

Use of GenAI tools to create fake videos

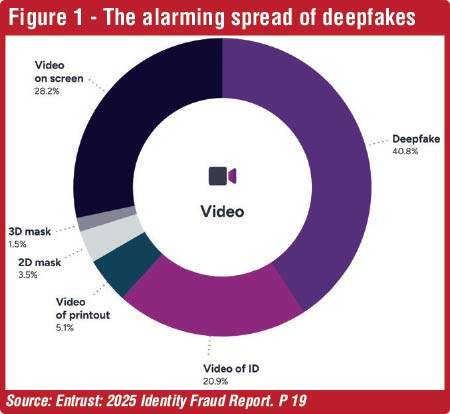

Second, among digital manipulations, GenAI-assisted fraud is on the increase. GenAI-assisted tools are increasingly used to create convincing phishing emails or to produce realistic deepfakes with apps that swap the faces of real persons into fabricated videos. There are also sites claiming to create realistic documents for use by prospective scammers. The Entrust report says that deepfakes now account for a sizeable 40% of all biometric fraud.

More sophisticated frauds

Third, fraud is getting more sophisticated and more accessible to potential scammers. The sophistication has come from the use of GenAI, the proliferation of deepfake videos, and techniques like injection attacks. In an injection attack in the general sense, the scammer sends malicious data to an application, causing it to execute commands or reveal unauthorised data. This happens when an application fails to properly validate or sanitise user input, allowing specially crafted data to be interpreted as code. In identity verification, the term also covers feeding a pre-recorded or synthetic video directly into the capture stream, for example through a virtual camera, so that the system never sees a live camera image.
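The general mechanism described above, in which crafted input is interpreted as code, can be sketched with a classic database example. The table, the account names, and the two lookup functions are invented for illustration; the sketch uses Python’s built-in sqlite3 module and is not drawn from the Entrust report.

```python
import sqlite3

# In-memory database with two invented accounts, for illustration only.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE accounts (owner TEXT, balance INTEGER)")
conn.executemany(
    "INSERT INTO accounts VALUES (?, ?)",
    [("alice", 100), ("bob", 250)],
)


def lookup_unsafe(owner):
    # Vulnerable: the input is pasted into the SQL text, so it can rewrite the query.
    query = f"SELECT owner, balance FROM accounts WHERE owner = '{owner}'"
    return conn.execute(query).fetchall()


def lookup_safe(owner):
    # Parameterised: the input is passed as data and is never parsed as SQL.
    return conn.execute(
        "SELECT owner, balance FROM accounts WHERE owner = ?", (owner,)
    ).fetchall()


# Crafted input that turns the WHERE clause into a condition that is always true.
crafted = "nobody' OR '1'='1"

print(lookup_unsafe(crafted))  # every account is returned
print(lookup_safe(crafted))    # nothing is returned: no owner has that literal name
```

The only difference between the two functions is whether the customer-supplied value is treated as part of the command or as plain data, which is the validation failure the paragraph above describes.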

Compromising biometric safeguards

It has been found that scammers are increasingly using forged videos to penetrate the biometric security measures installed by financial institutions. As the Entrust report shows in Figure 1, deepfakes have been the most prominent method of breaking biometric safeguards. Deepfakes are digitally manipulated videos or images in which a person’s face is altered to appear as someone else. Face images are used in two ways. One is face swapping, in which a new face is superimposed onto a target head. In the basic method, a face is crudely pasted over another, creating a ‘cheapfake’. Sophisticated face swaps, however, use GenAI tools to morph and blend a new face meticulously onto the target head, deceiving even computer systems. The other is the fully generated image, in which GenAI tools are used to produce extremely realistic images and videos of faces. This makes biometric safeguards vulnerable to scammers’ attacks.

Deepfakes bypassing security measures

How do scammers use deepfakes to attack financial institutions? Evidence shows that there are four methods of doing so.[8] First, deepfakes can be used to open fraudulent accounts in the names of unknown people. In this case, they are used to bypass identity verification during the KYC onboarding process. This happens in video calls or online account opening processes, where a person’s face, voice, and mannerisms are mimicked to deceive the traditional biometric and human review systems involved.[9] Second, deepfakes are created to solicit victims for phishing and investment scams. In this case, a celebrity appearing in the deepfake endorses a new investment scheme, like the one presently circulating in Sri Lanka with the voices of the President, the Prime Minister, and leading entrepreneurs. These videos have been effective in convincing gullible people to hand over their money or personal data. Third, scammers take over accounts by using deepfakes to dupe biometric checks and gain unauthorised access to existing accounts. Fourth, deepfakes are used to spread misinformation or disinformation to malign politicians, financial institutions, and leading entrepreneurs. In the case of financial institutions, this is done by rival institutions, disgruntled employees, or simple mischief makers. Whatever the source, it becomes costly for the financial institution involved to re-establish its image in the market and sustain the trust of its customers.

Need for continuous vigilance

The creation of deepfakes by using GenAI-assisted tools has become easy, cheap, and accessible to everyone. As a result, people with criminal intentions can use them to attack financial institutions, penetrate their biometric safeguards, and rob money belonging to their customers. It has become a continuous battle between the developers of biometric safeguards and potential scammers, each trying to get the better of the other. At one stage, the safeguard developers are on top; at another, the scammers push them down and prevail. What this means is that continuous vigilance is needed to keep these scammers at bay and to maintain safety and trust in financial institutions.

(The writer, a former Deputy Governor of the Central Bank of Sri Lanka, can be reached at [email protected])

References:

[1] https://www.facebook.com/share/1Fh9UxijN3/?mibextid=wwXIfr

[2] https://www.ft.lk/columns/Be-warned-of-thieves-and-nuisance-makers-in-AI-space/4-781986

[3] https://www.facebook.com/share/17xSLzNUG7/

[4] https://www.centralbanking.com/technology/7972454/sri-lankas-governor-is-latest-to-feature-in-deepfake-scam

[5] https://www.fincen.gov/system/files/shared/FinCEN-Alert-DeepFakes-Alert508FINAL.pdf

[6] https://www.entrust.com/sites/default/files/documentation/reports/2025-identity-fraud-report.pdf

[7] Ibid, p 6.

[8] Ibid, p 21.

[9] https://www.kaspersky.com/blog/how-deepfakes-threaten-kyc/51987/